State of the Art

A common question we receive: "Why build specialized sign language technology when large language models are improving so rapidly?"

This document demonstrates why general-purpose AI models—despite their impressive capabilities in text and image generation—fundamentally fail at sign language translation. The gap between current AI capabilities and functional sign language translation is exactly the opportunity InReach addresses.

Why General AI Models Fail at Sign Language

Sign language is not simply "gestures" that can be generated from text descriptions. It's a complete language with:

- Phonological structure: Handshape, location, movement, orientation, non-manual features

- Spatial grammar: 3D space used for reference, agreement, classifiers

- Simultaneity: Multiple articulators conveying different information at once

- Linguistic precision: Small differences in hand position change meaning entirely

General AI models treat sign language as a visual pattern to mimic, not a language to translate. This is why they fail consistently.
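To make the "small differences change meaning" point concrete, here is a minimal sketch of a sign as a bundle of phonological parameters. The `SignParameters` class, the `differing_parameters` helper, and the coarse feature values are all hypothetical simplifications for illustration; they are not InReach's representation. The example uses the well-known ASL pair MOTHER/FATHER, which differ mainly in location (chin versus forehead).

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class SignParameters:
    """Simplified phonological description of one sign (illustrative only)."""
    handshape: str
    location: str
    movement: str
    orientation: str
    non_manual: str


def differing_parameters(a: SignParameters, b: SignParameters) -> list[str]:
    """Return the names of the parameters on which two signs differ."""
    return [f.name for f in fields(a) if getattr(a, f.name) != getattr(b, f.name)]


# Hypothetical, coarse feature values for an ASL minimal pair.
MOTHER = SignParameters("open-5", "chin", "tap", "palm-sideways", "neutral")
FATHER = SignParameters("open-5", "forehead", "tap", "palm-sideways", "neutral")

print(differing_parameters(MOTHER, FATHER))  # ['location']
```

A model that reproduces the overall look of the movement but places the hand a few centimeters off has, in these terms, produced a different word.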

Spoken-to-Signed Translation

Video Generation Models

State-of-the-art video generation models (Veo 2, Sora) can create photorealistic humans performing movements—but they cannot generate linguistically accurate sign language.

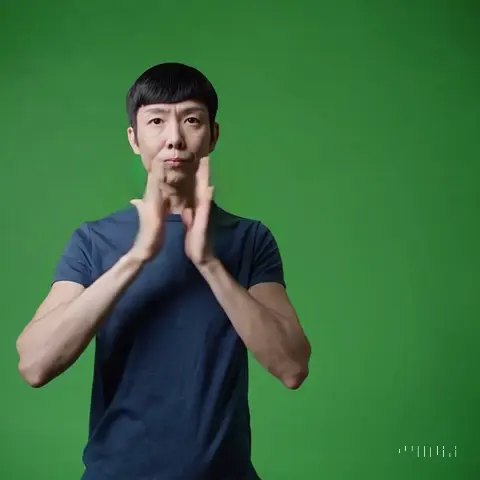

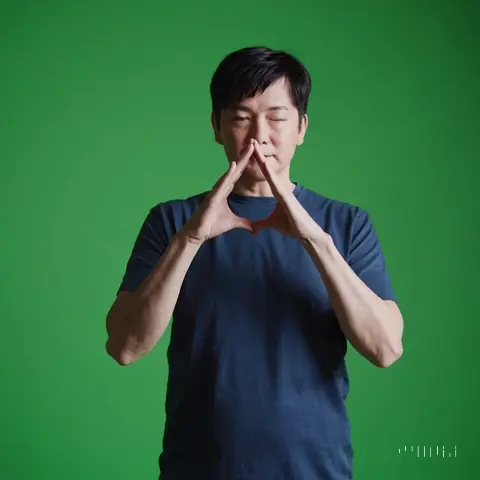

Google Veo 2 (2025/05/10)

Prompt

Sign language interpreter, green screen background, signing the American Sign Language sign for "House".

[Videos 1 and 2: Veo 2 outputs for the prompt above]

Result: Generates plausible-looking hand movements, but not the actual ASL sign for "House" (which requires two flat hands forming a roof shape).

OpenAI Sora (2024/12/14)

Prompt

Sign language interpreter, green screen background, signing the American Sign Language sign for "House".

Settings

- Aspect ratio: 1:1

- Resolution: 480p

- Duration: 5 seconds

- Quantity: 2

[Videos 1 and 2: Sora outputs for the prompt above]

Result: Similar failure—generates hand movements that look sign-like but are linguistically incorrect.

Attempting Movement Descriptions

When we provide detailed movement instructions (using signwriting-description) rather than asking for translation:

Prompt

Sign language interpreter, green screen background, performing an American Sign Language sign. With your dominant hand open, touch your forehead and move your hand away, palm facing out.

[Videos 1 and 2: outputs for the prompt above]

Result: Better adherence to instructions, but still under-specified—the model doesn't understand that hand orientation, speed, and facial expression are part of the linguistic sign for "Hello."

Why this matters: Even if you could describe every phonological feature in text (you can't—it would take paragraphs per sign), the model has no understanding of sign language grammar, non-manual features, or spatial relationships.

Image Generation Models

Image models are more mature than video models, but face the same fundamental problem: they generate visual patterns, not linguistic structures.

OpenAI DALL-E 3 via ChatGPT 4o (2024/12/08)

ChatGPT 4o Prompt

Sign language interpreter, green screen background, signing the American Sign Language sign for "House".

ChatGPT reformulates the prompt for DALL-E 3:

DALL-E 3 Prompt

A professional sign language interpreter signing the American Sign Language (ASL) sign for 'House' with clear and accurate hand positioning. The background is a plain green screen, suitable for chroma keying. The interpreter is dressed in neutral, professional attire, and the scene is well-lit to ensure clarity of the sign and facial expressions.

Result: Wrong handshapes and incorrect positioning; the output is not a linguistically valid sign. The model appears to have little or no training data mapping English words to ASL signs.

StabilityAI Stable Diffusion 3.5 Large (2024/12/08)

Prompt

Sign language interpreter, green screen background, signing the American Sign Language sign for "House".

Result: Similar failure—generates plausible-looking hand configurations that are not the actual sign.

Google DeepMind Imagen 3 (2024/12/05)

Result: The model refuses to generate images of sign language signs entirely.

Why these models fail: They are trained on internet images with text captions. There are virtually no labeled datasets of "ASL sign for [word]" at the scale needed to train these models. Sign language exists primarily in video, not static images with text labels.

Signed-to-Spoken Translation

OpenAI

At the time of testing, OpenAI models (GPT-4o, GPT-4 Turbo) did not accept video inputs, which rules out sign language recognition from video.

Meta

Meta is conducting research on sign language translation[1], but its models are research-only and not publicly available.

Google

Google is also conducting research on sign language translation[2], but publicly available models fail dramatically at the task.

Google Gemini 1.5 Pro (2024/12/08)

We tested Gemini 1.5 Pro with a video of a woman signing "What is your name?" in ASL.

Prompt + Attached video "example-sentence.mp4"

Translate the attached American Sign Language video "example-sentence.mp4" into English.

Gemini 1.5 Pro responses (5 attempts):

- "I'm sorry, I don't understand."

- "Excuse me. I have a question."

- "Hello, my name is [name]. Nice to meet you."

- "Excuse me. Do you mind if I sit here?"

- "Excuse me. Do you have a second? Do you mind if I ask you a question?"

Actual meaning: "What is your name?"

Gemini 2.0 Flash (2024/12/15) responses:

- "Don't understand."

- "Stop... I am thinking about this... I'm not sure."

- "Stop, I think I'm done?"

- "I don't know."

- "I don't understand."

Analysis: The model is hallucinating English text with no relationship to the actual signing. It cannot recognize individual signs, let alone understand sign language grammar or translate fluently.
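One rough way to quantify the mismatch is to score the responses listed above against the reference sentence. The sketch below uses exact match and a simple token-overlap F1; both are crude stand-ins chosen for brevity, not the evaluation used for this document, and the helper names (`tokens`, `exact_match_rate`, `token_f1`) are hypothetical.

```python
import re
from collections import Counter

REFERENCE = "What is your name?"

GEMINI_1_5_PRO = [
    "I'm sorry, I don't understand.",
    "Excuse me. I have a question.",
    "Hello, my name is [name]. Nice to meet you.",
    "Excuse me. Do you mind if I sit here?",
    "Excuse me. Do you have a second? Do you mind if I ask you a question?",
]
GEMINI_2_0_FLASH = [
    "Don't understand.",
    "Stop... I am thinking about this... I'm not sure.",
    "Stop, I think I'm done?",
    "I don't know.",
    "I don't understand.",
]


def tokens(text: str) -> list[str]:
    """Lowercase and keep only letter/apostrophe runs as tokens."""
    return re.findall(r"[a-z']+", text.lower())


def exact_match_rate(outputs: list[str], reference: str) -> float:
    """Fraction of outputs whose tokens equal the reference tokens."""
    ref = tokens(reference)
    return sum(tokens(o) == ref for o in outputs) / len(outputs)


def token_f1(output: str, reference: str) -> float:
    """Bag-of-words F1 between one output and the reference."""
    out, ref = Counter(tokens(output)), Counter(tokens(reference))
    overlap = sum((out & ref).values())
    if overlap == 0:
        return 0.0
    precision = overlap / sum(out.values())
    recall = overlap / sum(ref.values())
    return 2 * precision * recall / (precision + recall)


for name, outputs in [("Gemini 1.5 Pro", GEMINI_1_5_PRO),
                      ("Gemini 2.0 Flash", GEMINI_2_0_FLASH)]:
    best = max(token_f1(o, REFERENCE) for o in outputs)
    print(name, exact_match_rate(outputs, REFERENCE), round(best, 2))
```

Neither model produces an exact match in five attempts. The only response with any word overlap is the Gemini 1.5 Pro introduction ("my name is"), and it still conveys a statement where the signer asked a question.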

Why this fails: General vision-language models are trained largely on internet videos paired with spoken audio or text captions, with little or no data for sign language video → text translation. They process signing as hand movements, not as language.

The Specialized Approach: Why InReach Works

General AI models fail at sign language because they:

- Lack sign language training data at scale

- Treat signing as visual patterns, not linguistic structures

- Cannot handle spatial grammar or multi-channel simultaneity

- Have no understanding of phonology (handshape, location, movement, orientation)

- Cannot generate or recognize non-manual features (facial grammar)

InReach's specialized approach:

Spoken-to-Signed Pipeline

- Text → SignWriting translation: Uses machine translation models trained on parallel sign language corpora

- SignWriting → Pose generation: Converts linguistic notation to 3D skeletal poses

- Pose → Video rendering: Generates photorealistic or avatar-based signing from poses

Why this works: By using SignWriting as an intermediate representation, we separate the translation problem (language-to-language) from the rendering problem (notation-to-video). General AI models try to solve both simultaneously and fail at both.
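The separation described above can be sketched as three independently replaceable stages. Everything in this block is hypothetical: the type aliases, the `SpokenToSignedPipeline` class, and the stub stages stand in for real models and only show how the stages compose.

```python
from dataclasses import dataclass
from typing import Callable

# Hypothetical intermediate types; a real system would carry richer data.
SignWriting = list[str]    # one SignWriting string per sign
Pose = list[list[float]]   # one flat list of keypoint coordinates per frame
Video = list[bytes]        # encoded frames


@dataclass
class SpokenToSignedPipeline:
    translate: Callable[[str], SignWriting]   # language-to-language step
    animate: Callable[[SignWriting], Pose]    # notation-to-pose step
    render: Callable[[Pose], Video]           # pose-to-video step

    def run(self, text: str) -> Video:
        notation = self.translate(text)
        poses = self.animate(notation)
        return self.render(poses)


# Stub stages (placeholders, not real models).
def stub_translate(text: str) -> SignWriting:
    return [f"<sign:{word.lower()}>" for word in text.split()]


def stub_animate(notation: SignWriting) -> Pose:
    return [[0.0, 0.0, 0.0] for _ in notation]  # one dummy frame per sign


def stub_render(poses: Pose) -> Video:
    return [b"frame" for _ in poses]


pipeline = SpokenToSignedPipeline(stub_translate, stub_animate, stub_render)
print(len(pipeline.run("hello world")))  # 2
```

Because each stage has a narrow input and output type, the translation step can be evaluated against linguistic references while the renderer is swapped (avatar versus photorealistic) without retraining it.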

Signed-to-Spoken Pipeline

- Video → Pose estimation: MediaPipe Holistic extracts 543 3D keypoints

- Pose → SignWriting transcription: Trained models recognize signs from pose sequences

- SignWriting → Text translation: Machine translation to spoken language

Why this works: We use pose estimation to reduce the visual complexity, then apply linguistic models trained on sign language data. General AI models try to go directly from pixels to text and hallucinate.
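The 543-keypoint figure is the sum of MediaPipe Holistic's landmark groups: 33 body pose, 468 face, and 21 per hand. The sketch below shows that arithmetic and how much smaller a pose frame is than a raw image. It does not call MediaPipe; `flatten_frame`, the zero placeholder for undetected hands, and the 640×480 frame size are assumptions made for illustration.

```python
from typing import Optional

# Landmark counts per MediaPipe Holistic component.
LANDMARK_COUNTS = {"pose": 33, "face": 468, "left_hand": 21, "right_hand": 21}
TOTAL_LANDMARKS = sum(LANDMARK_COUNTS.values())

Landmark = tuple[float, float, float]  # (x, y, z)
MISSING: Landmark = (0.0, 0.0, 0.0)    # assumed placeholder for undetected parts


def flatten_frame(groups: dict[str, Optional[list[Landmark]]]) -> list[Landmark]:
    """Concatenate landmark groups in a fixed order, padding missing groups."""
    frame: list[Landmark] = []
    for name, count in LANDMARK_COUNTS.items():
        points = groups.get(name)
        if points is None:  # e.g. a hand that is out of view
            points = [MISSING] * count
        if len(points) != count:
            raise ValueError(f"{name}: expected {count} landmarks, got {len(points)}")
        frame.extend(points)
    return frame


frame = flatten_frame({
    "pose": [(0.5, 0.5, 0.0)] * 33,
    "face": [(0.5, 0.3, 0.0)] * 468,
    "left_hand": None,
    "right_hand": [(0.6, 0.6, 0.0)] * 21,
})

pixel_values = 640 * 480 * 3         # assumed RGB frame size
keypoint_values = len(frame) * 3     # x, y, z per landmark
print(TOTAL_LANDMARKS, pixel_values, keypoint_values)  # 543 921600 1629
```

Under these assumptions each frame shrinks from roughly 900,000 numbers to under 2,000, which is the sense in which pose estimation reduces visual complexity before any linguistic model is applied.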

The Competitive Moat

Question: "Won't OpenAI/Google/Meta eventually solve this?"

Answer: Yes, eventually—but not soon, and not better than specialized solutions. Here's why:

1. Data Scarcity

- General models need millions of labeled examples

- Sign language video data is scarce and expensive to annotate

- Even Meta/Google's research efforts struggle with limited datasets

2. Linguistic Complexity

- Sign languages are not "gestural encodings" of spoken languages

- Spatial grammar, non-manual features, classifiers require linguistic understanding

- General models don't have the architecture to handle multi-channel simultaneity
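Multi-channel simultaneity can be pictured as several time-aligned tracks rather than one linear sequence of words. The sketch below is illustrative only: the `Span` class, the `active_at` helper, the gloss, and the timings are invented, though the underlying fact (ASL wh-questions are marked with furrowed brows alongside the manual signs) is standard.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Span:
    channel: str   # articulator track, e.g. "manual" or "brows"
    label: str     # what that articulator is doing
    start: float   # seconds
    end: float     # seconds (exclusive)


def active_at(spans: list[Span], t: float) -> dict[str, str]:
    """Return the label active on each channel at time t."""
    return {s.channel: s.label for s in spans if s.start <= t < s.end}


# Hypothetical gloss and timings for an ASL wh-question.
UTTERANCE = [
    Span("manual", "YOUR", 0.0, 0.4),
    Span("manual", "NAME", 0.4, 0.9),
    Span("manual", "WHAT", 0.9, 1.4),
    Span("brows", "furrowed", 0.9, 1.4),
]

print(active_at(UTTERANCE, 1.0))  # {'manual': 'WHAT', 'brows': 'furrowed'}
```

A flat token sequence such as "YOUR NAME WHAT" drops the brows track entirely, so a model limited to that view loses the information that marks the utterance as a question.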

3. Deployment Model

- Even if they achieve good translation, they'll offer it as cloud APIs

- InReach's client-side processing provides privacy, offline capability, and zero server costs

- Our deployment model (browser extension, zero redesign) is a competitive advantage independent of translation quality

4. Specialization Beats Generalization

- Medical imaging AI beats general vision models for diagnosis

- Code-specialized models (e.g., Codex, the code-tuned model behind the original GitHub Copilot) outperformed their general-purpose base models on programming tasks

- Sign language requires specialized architecture, data, and evaluation

Timeline estimate: General AI companies might achieve functional sign language translation in 3-5 years. By then, InReach aims to have:

- Millions of users

- Partnerships with major platforms

- Proprietary training data from user interactions

- 3-5 year technical lead in specialized architectures

Conclusion

The state-of-the-art in general AI demonstrates exactly why InReach exists:

✅ General models cannot translate sign language despite billions of dollars invested in general AI R&D

✅ Specialized approaches work (proven in academic research)

✅ The gap between general AI and functional sign language translation is our opportunity

✅ Our deployment model (client-side, universal) provides a moat even as models improve

We're not building sign language technology merely because general AI is failing.

We're building it because sign language is a complete language that requires specialized, linguistically-informed approaches.

And we're building it now because the technology stack (MediaPipe, TensorFlow.js, Transformers, SignWriting) has finally matured enough to make real-time, client-side translation possible.

[1] Rust et al. 2024. Towards Privacy-Aware Sign Language Translation at Scale.

[2] Zhang et al. 2024. Scaling Sign Language Translation.